You're a 16-year-old in a summer learning program in Chicago. You open LRNG — a platform your program uses to assign coursework and issue digital credentials. You're working through a playlist, which is what the platform calls a course. Each playlist has multiple activities. Complete them all, and you earn a badge — a credential proving you finished.

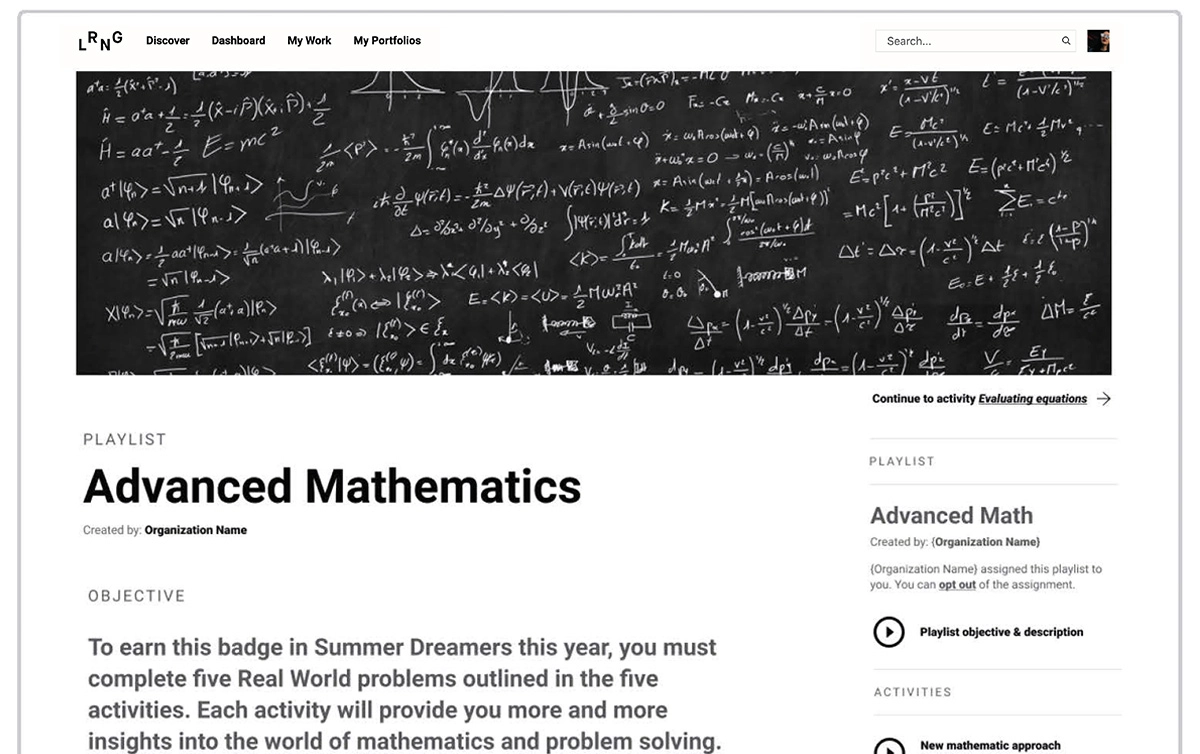

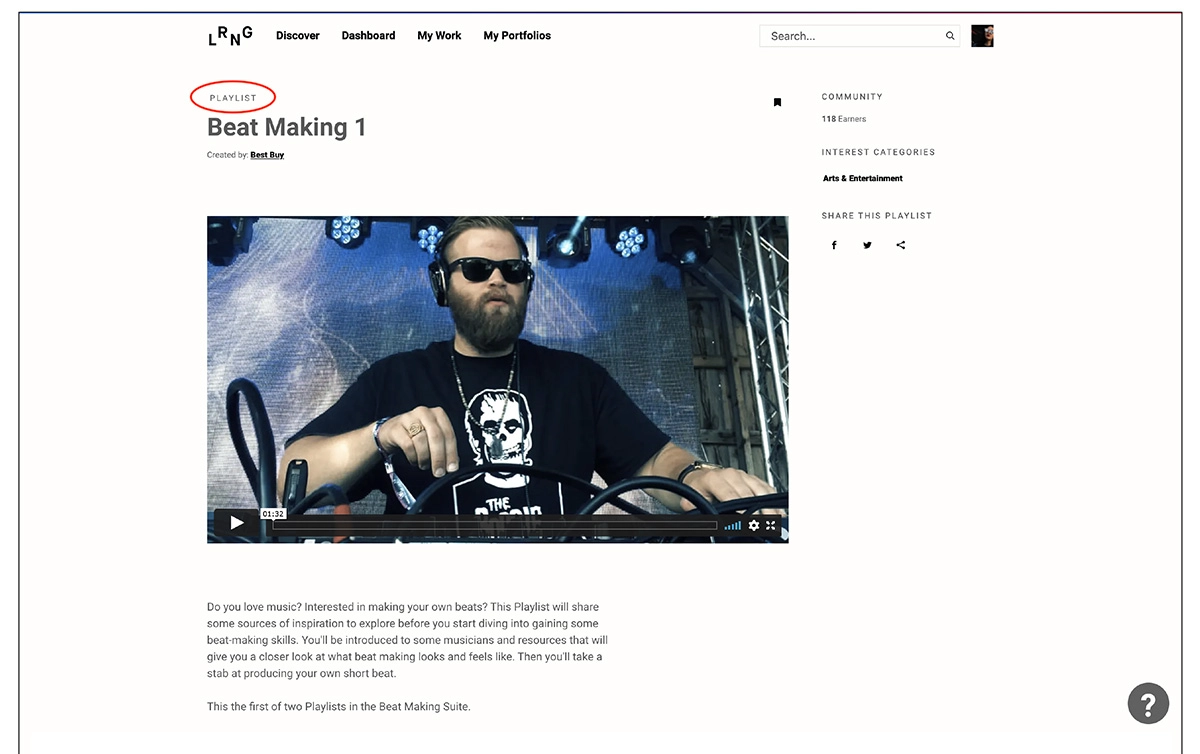

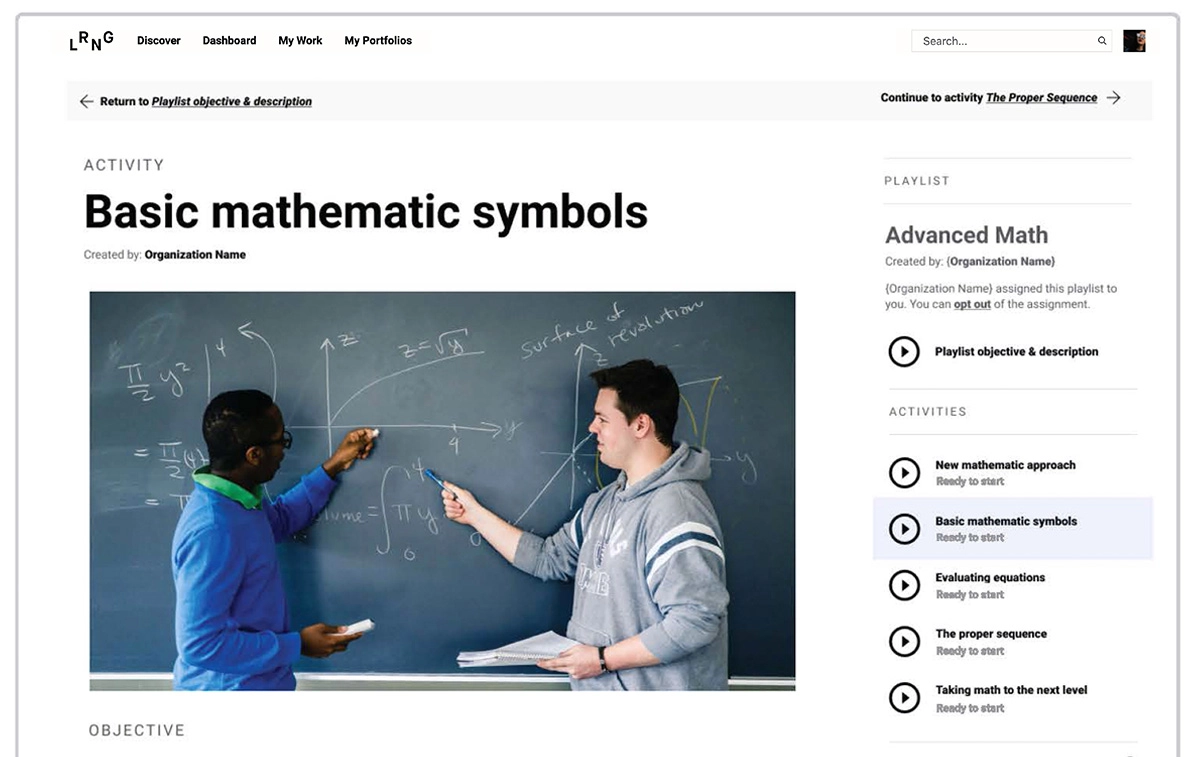

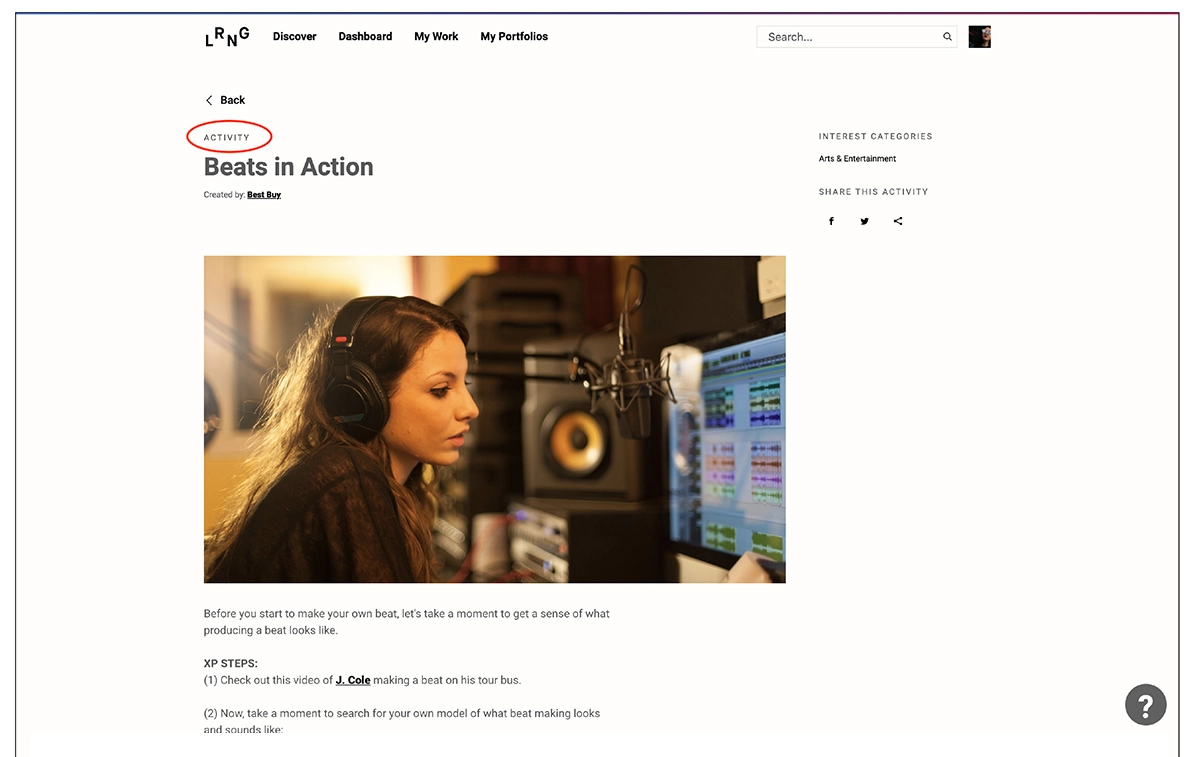

But you can't tell which playlist you're in. The playlist view and the activity view look almost identical — distinguished only by a small label in easily overlooked text. There's no indication of which playlist an activity belongs to. The only way to find out is to hit the back button.

You don't know how many activities you've completed, how many are left, or whether your submission was received, needs revision, or has been approved. You're navigating a credentialing system with no progress indicator.

The LRNG platform had evolved over time — features were added incrementally without reconsidering the cumulative experience.

A recent simplification had reduced component configurations from 24 to six, but the remaining variation didn't always communicate meaningful distinctions. When distinctions actually mattered — like telling the difference between a playlist and an activity within it — they were so subtle as to be imperceptible.

During a summer session, LRNG staff gathered learner feedback — high-level statements without follow-up research:

The feedback confirmed that the interface needed improvement.

LRNG wanted these problems addressed before the next summer session, roughly three months away, and there was no budget for additional user research or testing.

The team numbered 24 to 30 people, but its workflow kept designers, developers, and front-end engineers in separate lanes with limited collaboration.

And the approval process for learner work was complex enough that any interface change risked breaking workflows that staff depended on.

When I first joined the LRNG project, I spent time using the application myself and documented over 110 UX concerns. Many of them overlapped with the learner feedback.

Rather than treating the feedback as a standalone problem to solve, I connected it to the broader pattern of UX issues I'd already mapped — and used that combined evidence to build a strategic action plan that sequenced the work so each phase built on the last.

The action plan addressed all of it, organized into JIRA epics with clear dependencies so nothing had to be torn down to make room for what came next.

The action plan wasn't a list of features to build. It was a sequenced roadmap: each phase mapped to a JIRA epic and listed the feedback statements it addressed and the goals of the work.

The first epic, for example — "Playlist UI Redesign" — addressed the most urgent feedback: learners getting lost in the interface.

I defined the goals explicitly: from the playlist view, learners should be able to quickly identify where they are and how far they've progressed. The work should also increase playlist submissions.

Each subsequent epic built on the previous one. The navigation redesign came before the approval workflow changes because the approval changes depended on learners understanding where they were first. The notification improvements came after both because they required a stable interface to reference.

This sequencing mattered because priorities had shifted mid-stream before.

By structuring the plan so each phase delivered standalone value while also preparing the ground for the next, I ensured that even if only part of the plan was implemented, the completed work would still improve the learner experience.

The plan went through several iterations with stakeholders and team members as I worked business considerations and technical limitations into each phase.

Once the plan was agreed upon, I organized all the JIRA epics and tickets to correspond with it.

Before I could redesign how learners tracked their progress, I needed to fully understand the approval workflow — the system by which learner submissions were reviewed, given feedback, approved, or sent back for revision.

The process was complex.

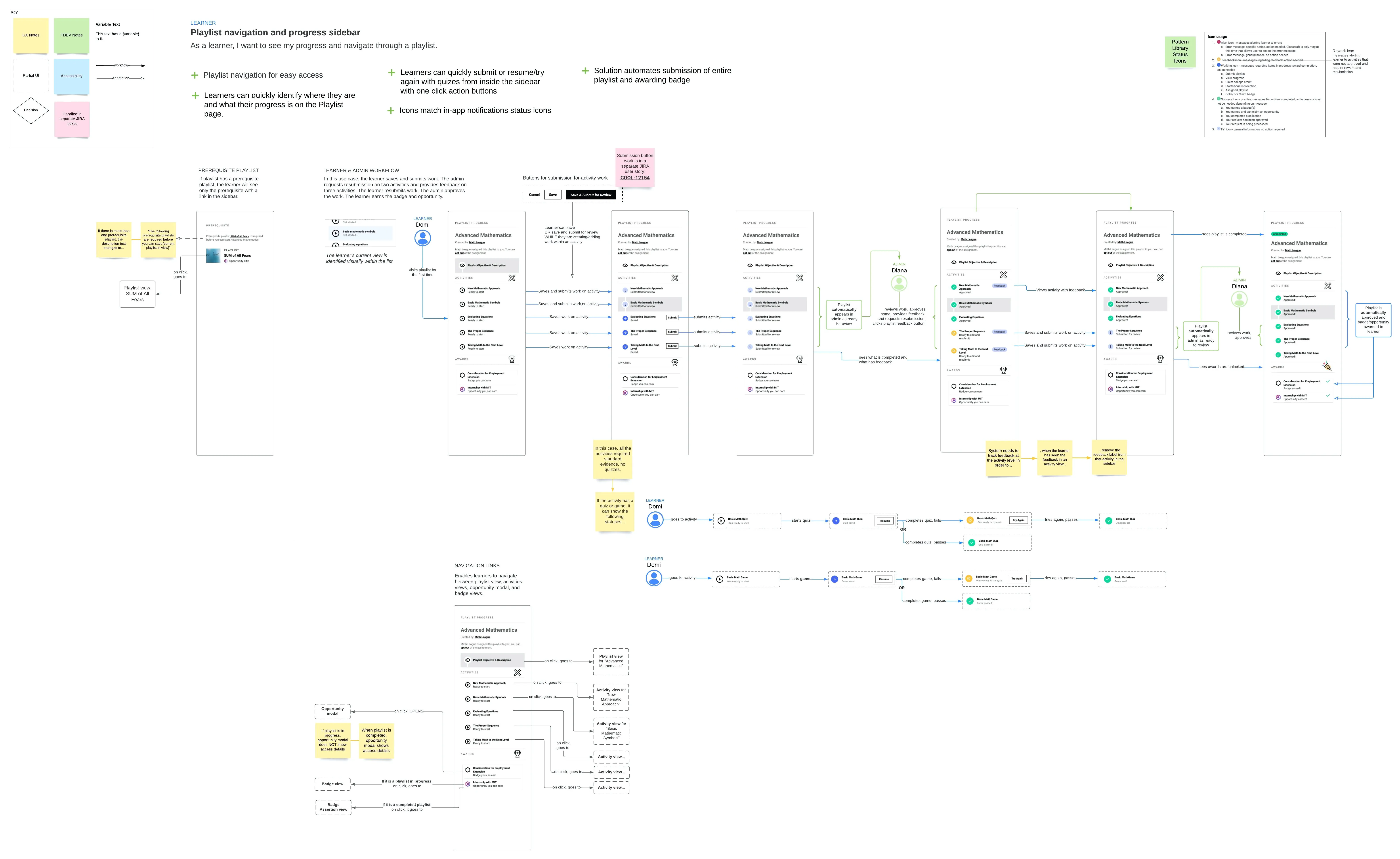

I created a workflow chart mapping every path a submission could take: initial submission, admin review, feedback, resubmission, approval, and the various states a learner could be in at each stage.

The chart served a dual purpose — it informed my design decisions and it became a negotiation tool.

When stakeholders argued that a change should be simple or easy, I'd pull up the workflow chart. The visual complexity was often all that was needed to redirect the conversation from assumptions to productive collaboration.

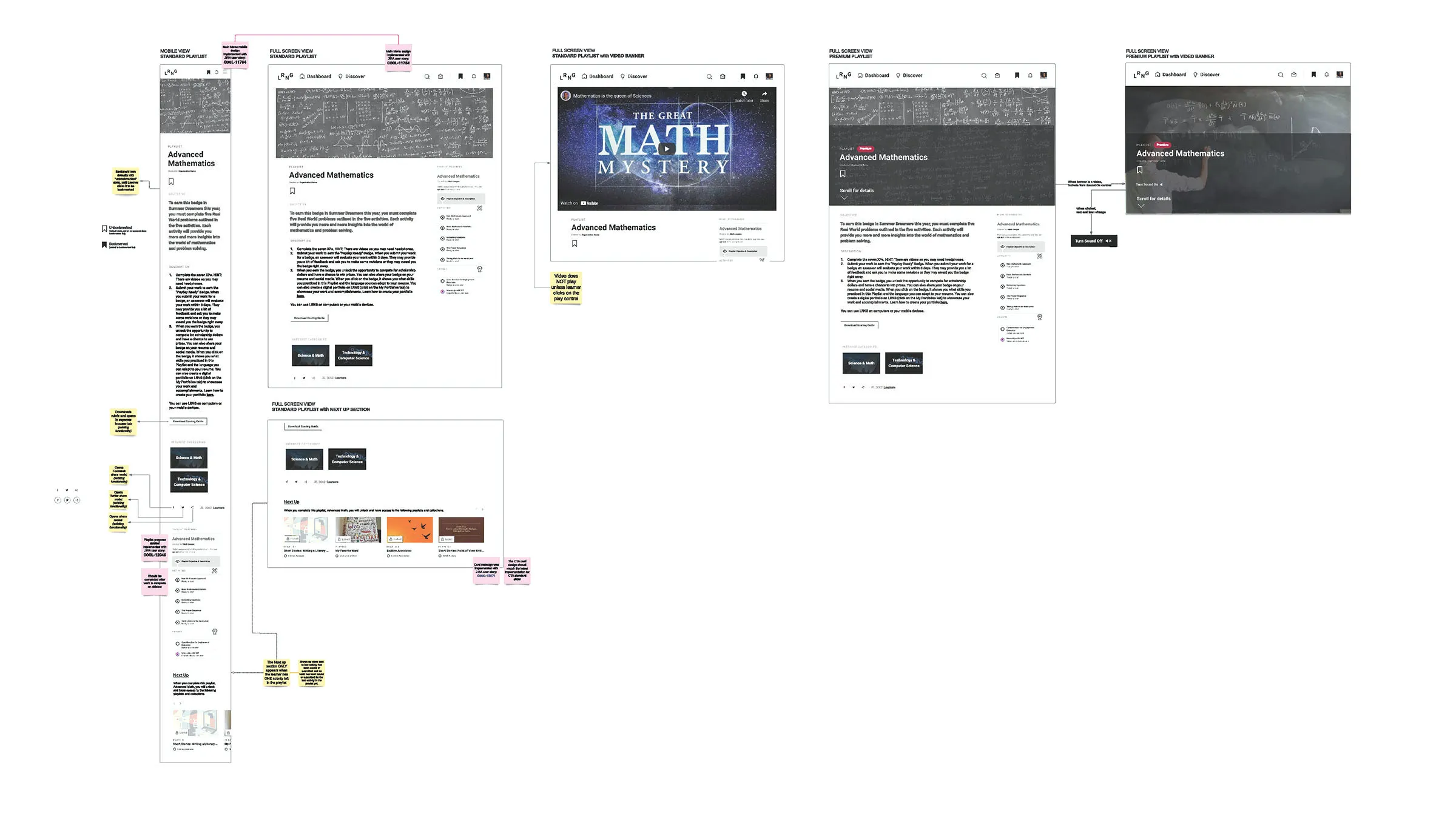

The core of the redesign was a sidebar navigation and progress indicator for playlists. The team had previously pitched this concept to LRNG — it had been approved but never implemented. I found the original work, reviewed everything I could, and evaluated why it had been deprioritized.

The previous design had the right instinct — a step-by-step concept with checkmarks for completed activities. But it hadn't been fully thought through.

It didn't account for how admins review and approve work. It didn't address what happens when a playlist has prerequisites — when you have to complete one playlist before starting another.

It didn't handle the difference between a standard playlist and a premium playlist, or playlists with video banners, or mega-badges that require completing multiple playlists.

I updated and expanded the designs, focusing on messaging that would guide learners through their educational journey.

Without a user testing budget, I iterated through multiple versions and shared them broadly — with my team, other Concentric Sky teams, and colleagues whose feedback could approximate what I couldn't get from learners directly.

Concentric Sky's portfolio included National Geographic, NASA, Encyclopedia Britannica, and PBS — the expertise in the building was real, even if it wasn't a substitute for dedicated research.

I built the interface designs in Figma but documented the user journey in Lucidchart.

The Lucidchart diagrams served as the single source of truth for each JIRA ticket — showing the sidebar design alongside the user's journey through it, so stakeholders and developers could visually trace how the interface changed with each step or situation.

The sidebar showed learners what they'd completed, what they'd submitted, what had received feedback, and what remained. It gave them the one thing the existing interface lacked entirely: a sense of where they were and how far they had to go.

In addition to incorporating the sidebar, I redesigned both the playlist and activity views with a focus on information hierarchy, making it visually obvious whether you were looking at a playlist or an activity within one.

I accounted for every variant: standard playlists on both mobile and desktop, playlists with video banners, premium playlists, and premium playlists with video banners.

While I would normally have recommended more sweeping changes to the design, the timeline demanded pragmatism. I made meaningful adjustments to the design pattern library while still using existing components, giving developers familiar building blocks they could implement quickly rather than an entirely new system to learn.

When I joined, JIRA tickets were assembled by multiple contributors — UX requirements, design links, and component references all added separately.

Developers had to piece together disconnected lists to understand the full experience.

I started creating comprehensive Lucidchart documents that unified everything — UX requirements, design specifications, interaction states, responsive behavior, and acceptance criteria — into a single, cohesive visual. Developers could open one document and see the complete picture: what to build, how it should look at different sizes, how it should behave with different content types, and what the acceptance criteria demanded.

This wasn't just about developer convenience. It was about accountability. When every decision lives in one place, there's no ambiguity about what was agreed upon. No one picks between conflicting references. No one guesses.

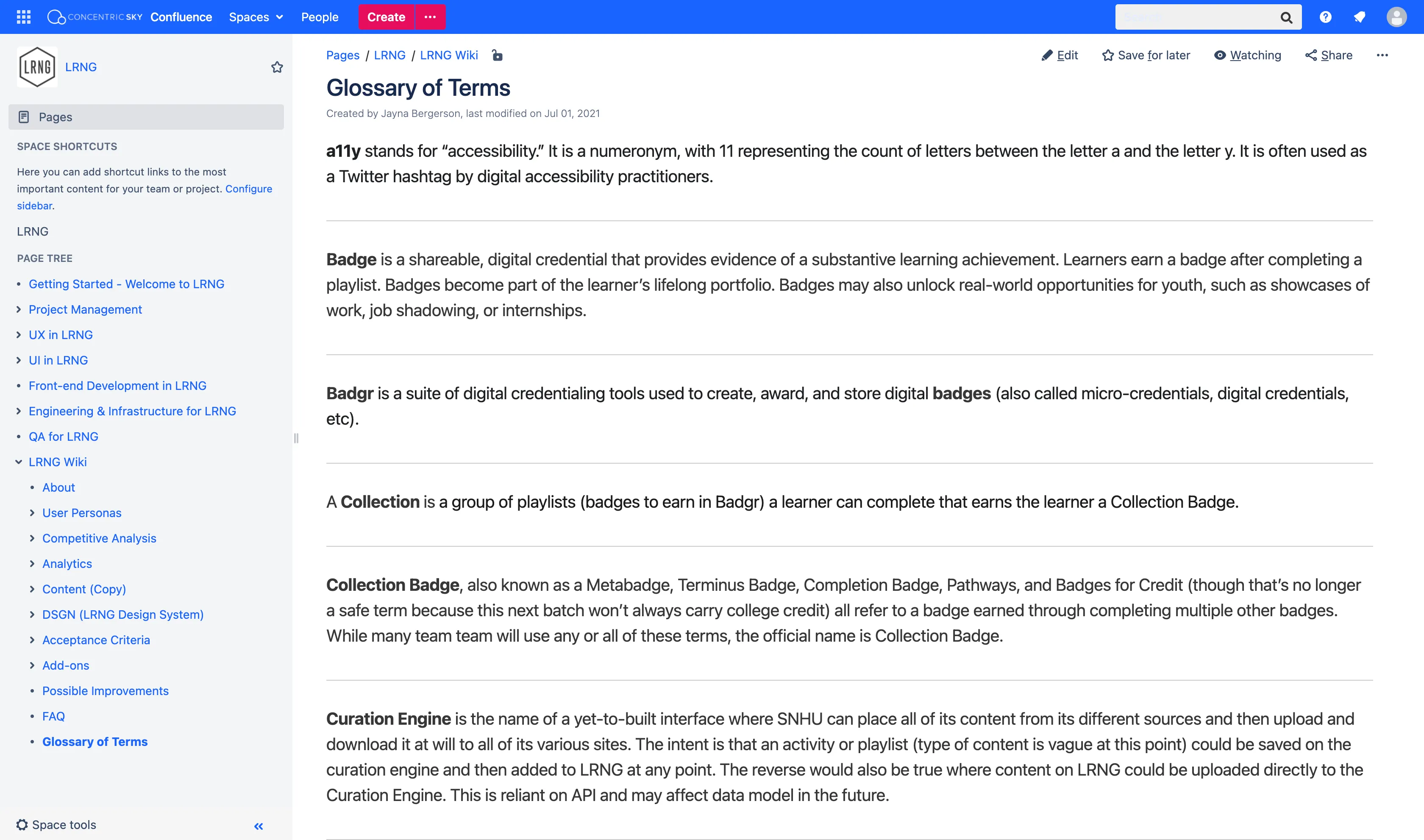

I also recognized early that design system specs, analytics reports, and consistency requirements were scattered across different locations — not easily accessible to the full team.

I established and maintained a documentation practice in Confluence: a central repository where all team members could view, edit, and contribute. It supported onboarding, decision-making, and long-term consistency.

Design-phase errors dropped by an estimated 60% — a figure provided by the project manager, reflecting how the documentation practices, Lucidchart source-of-truth approach, and detailed acceptance criteria eliminated the guesswork that had previously caused rework.

The strategic action plan reframed how the team organized work. Before I joined, the LRNG project averaged two to three features per year. During my time on the project, I worked on over 22 features, seven epics, and two milestones — a pace enabled by the sequenced roadmap and documentation infrastructure I established.

LRNG staff and associates enthusiastically supported the redesign. The playlist navigation and progress sidebar directly addressed every piece of learner feedback that had prompted the engagement.

Unfortunately, the plan was never fully implemented. Only one of the five epics was started before a last-minute shift in priorities and budget limitations put the work on hold indefinitely. The project closed in 2021.

The outcome I can't measure is the one that matters most: whether learners would have found their way more easily.

The feedback was clear, the diagnosis was sound, and the designs addressed every concern that had been raised. But without implementation, the work remains a plan rather than a result.

If I were to do this engagement again, I'd push harder to get even a minimal version of the sidebar into production during the first epic — something learners could interact with during the next summer session, even if it only showed basic progress.

A shipped fragment that helps 72,000 learners navigate their playlists is worth more than a comprehensive plan that never reaches them.

I'd also find ways to get learner feedback without a formal research budget — lightweight methods like hallway testing with internal team members who matched the age demographic, or asynchronous feedback from the summer program coordinators who observed learners daily.

The expertise at Concentric Sky was valuable, but nothing replaces hearing from the people the design is meant to serve. ▪️

All work © Jayna Bergerson unless otherwise noted.